My favorite colors to use are pink or a nice aqua blue color. I also like the default style the terminal has, called "Novel".

For the heck of it, I decided to ask ChatGPT. Here was its response:

As a language model, I do not have personal preferences or the ability to have a favorite font. However, some popular terminal fonts include Monospace, Consolas, and Inconsolata.

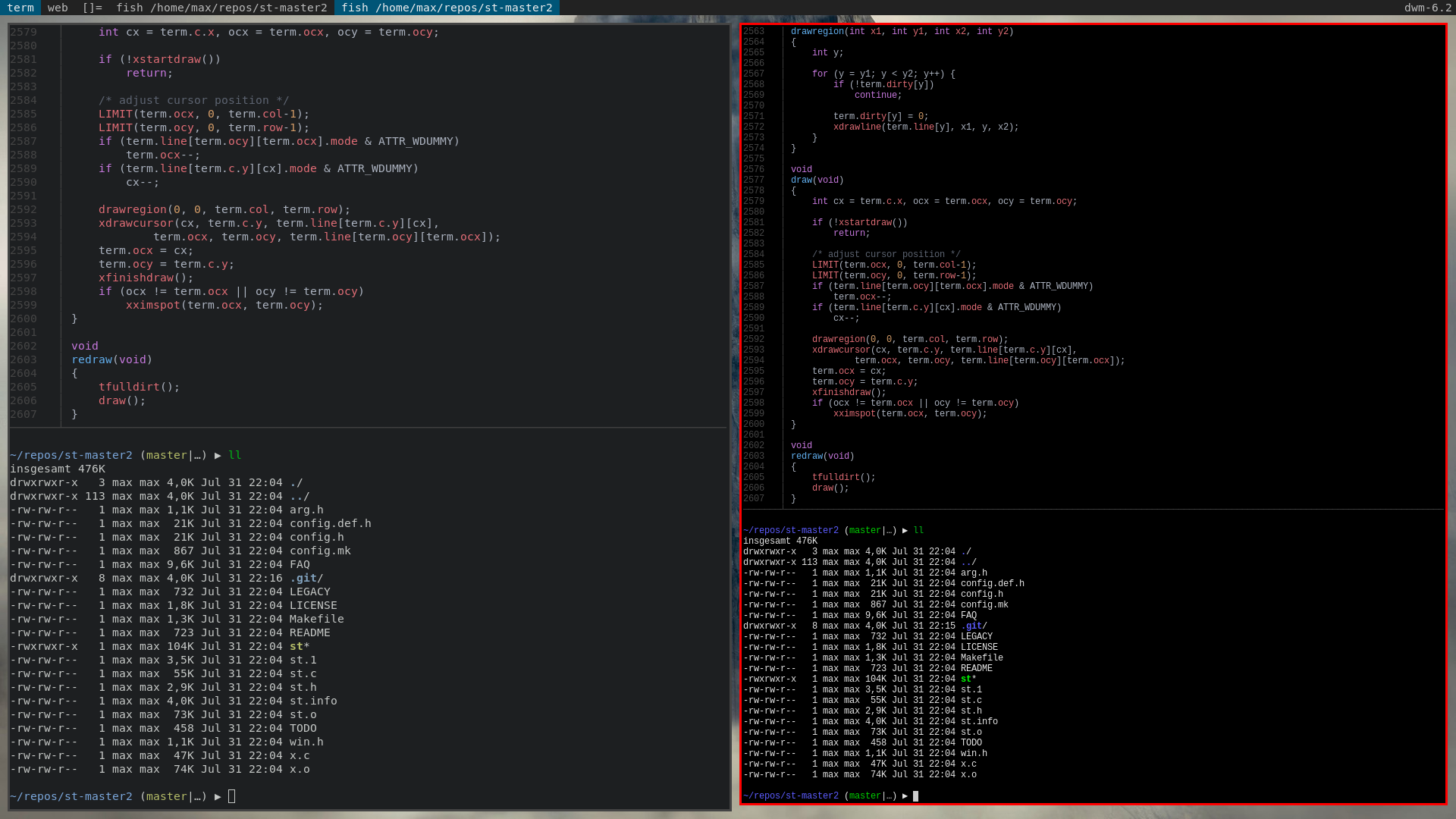

I've gone through various fonts over the years, but I always try to use the same thing in my terminal as in my IDE. At the moment, Monaco regular at 16 pt (so much better than 12 or 14 pt fonts). I also use the Solarized Dark color scheme for both my IDE and the terminal. If I could, anything work or code related would all use the same color scheme, and the same fonts, so it all looks uniform.

Jenny Panighetti

Keeping it pretty in pink

This reminds me of the time I pranked the other devs on my team by making all of their terminals pink (I was the only woman). It was like a baby pink background with hot pink text. I like to make my personal terminals pretty, but for work I leave it white on black and just make the font around 16 pt because I appreciate the larger font size.

I just leave my terminal set to SF Mono font, but I make it bolder and bump it up a few points so I can see it better.

Winit's X11 backend used to always report a DPI factor of 1.0 (unless you used `MonitorId::get_hidpi_factor` instead of `Window::hidpi_factor`); now both methods report the same (correct) value. Changing the `hidpi_factor` method in Alacritty's `Window` struct to always return 1.0 would be a simple temporary fix, if you want such a thing.

I also think has a branch somewhere that handles `HiDPIFactorChanged`. Though, that event is presently never emitted on any platform other than macOS.

I've been working on going through every backend to have proper (and consistent) DPI support (rust-windowing/winit#105 (comment)), and so far X11, Windows, macOS, and iOS are done (which is when I got sidetracked, since glutin's iOS backend doesn't even compile, so I had to fix that too). While the implementation + API migration will be done soon, I can't say how quickly that will make it downstream, since 1) ideally the changes will be well-tested before being released, 2) I'm also considering doing some work on glutin this time, and 3) I'm putting off writing documentation.

device_pixel_ratio: 1.0833334 (as reported by alacritty)

device_pixel_ratio: 1.6666666 (as reported by alacritty)

I think maybe it comes down to how the sizes are rounded. The small screen gives me 21 pixel high cells and the big one gives me 13. If I shrink the font by one size on the small screen I get 18 px high cells, which looks good to me; however, 18/13 = 1.38. So simply looking at the math, I guess Alacritty is doing the right thing and rounds to the closest font size. Without knowing the details of how this is implemented, maybe what I want is to be able to set the "maximum font size" so that it never rounds up?

I noticed that I can use partial font sizes as well. It looks like 7.5 is closer to what I want (13 pixels on big screen and 19 on small):

`Configuration loaded from /home/niklas/.config/alacritty.yml`

`Finished initializing glyph cache in 0.040205583`

`Size = size_f32_pts * resolution / 72 = 4`

`Size::new = size * 2 as i16 = 8 as i16 = 8`

Now don't get me wrong, it is likely that there was at least some kind of math error in my calculations, but it seems like the result for running this on your external monitor should not be `Size(12)`. It seems like the 12/13 could be a rounding/truncation error; however, the 12 instead of 8 just seems a bit odd. I'd love to see what a println of each value used in this calculation would result in for your system.

However, looking at these calculations, it seems like the font on your external monitor should be smaller, not bigger. Which makes sense because the DPR is smaller on your external monitor too. From what I can tell, Alacritty's current behavior doesn't seem too far off. There might still be some inaccuracies, but I'd love to fix those if we can get some more info. However, I'd still like to know if the current font size calculation is actually correctly using the DPR. If nothing else, I hope this at least sheds a little more light on how font sizes are calculated with freetype.

Also a little postscript: I'm not entirely sure why Alacritty's `Size` is double the actual font size. I'm not sure why this is done, but it shouldn't have any influence on scaling because it's a constant factor applied to all font sizes equally, so just halving the font size should result in the same behavior as not doubling the font size at all. It is possible that this could mess with floating point accuracy though.
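The font size arithmetic discussed in this thread can be sketched in a few lines of Rust. This is only an illustration under stated assumptions, not Alacritty's or winit's actual code: `points_to_pixels`, `HalfPoints`, and `scale_ratio` are hypothetical names. The conversion uses the usual FreeType-style relation (one point is 1/72 inch, so pixels = points * resolution / 72), and `HalfPoints` mirrors the "size * 2 stored as i16" step quoted above.

```rust
/// Points-to-pixels conversion in the FreeType style:
/// one point is 1/72 inch, so pixels = points * dpi / 72.
pub fn points_to_pixels(points: f32, dpi: f32) -> f32 {
    points * dpi / 72.0
}

/// Hypothetical stand-in for a font size stored as doubled points in an
/// i16, mirroring the `size * 2` step quoted in the thread.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub struct HalfPoints(pub i16);

impl HalfPoints {
    /// Doubles the point size and truncates toward zero, so only
    /// multiples of 0.5 pt are represented exactly.
    pub fn from_points(points: f32) -> Self {
        HalfPoints((points * 2.0) as i16)
    }

    /// Recovers the point size by halving the stored value.
    pub fn as_points(self) -> f32 {
        f32::from(self.0) / 2.0
    }
}

/// Ratio between the scale factors (device pixel ratios) of two monitors.
pub fn scale_ratio(dpr_a: f32, dpr_b: f32) -> f32 {
    dpr_a / dpr_b
}
```

With the two device pixel ratios reported in the thread, `scale_ratio(1.6666666, 1.0833334)` comes out at roughly 1.54. The measured cell-height ratios, 21/13 (about 1.62) and 18/13 (about 1.38), sit on either side of that value, which is at least consistent with the rounding explanation suggested above. Under this sketch's assumptions, `HalfPoints::from_points(7.5)` stores 15, so a half-point size like 7.5 survives the doubling step without loss.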
